Image credit: Pexels

The rapid adoption of artificial intelligence has changed workplace expectations. Specialized technical skills that were once limited to specific fields have become essential competencies across business sectors. The use of AI tools is no longer limited to engineers and data scientists; employees across industries are using them to streamline their work. This has changed what employers look for in a candidate, as organizations now prioritize AI literacy.

AI Literacy as a Mindset, Not a Skillset

For leading tech companies, AI literacy is less a technical checklist and more a way of thinking. Instead of emphasizing formal training or certifications, these businesses are looking for candidates with curiosity, a willingness to experiment, and the ability to adapt to uncertain environments.

At Pigment, this change is more pronounced. The company is re-evaluating the way its employees work in this AI-driven landscape. The focus is not just on mastering tools, but also on developing a mindset that embraces change and continuous learning.

Kenneth Shen, CEO & Founder of Pigment, explains, “I think right now, AI literacy is a mindset challenge, not a technical one.”

Shen expands on this idea by pushing back against the assumption that AI literacy requires formal training. “AI can teach you anything if you ask it. It’s literally, by definition, intelligence, and that intelligence is accessible to anyone,” he explains.

Rather than encouraging structured learning paths, Shen emphasizes experimentation. “Run experiments with AI. Work with it. Notice what you’re trusting it with versus what you’re trusting yourself with, and push that edge,” he says. This approach shifts the focus from mastering tools to developing judgment around them.

For Shen, the distinction between average and highly AI-literate individuals comes down to that boundary. “The people who are the most AI fluent are the ones who have figured out what to trust it with and what not to trust it with,” he notes.

He also cautions against over-reliance on structured education in this space. “Don’t go buy a course. People are preying on the feeling that you need to learn it,” Shen adds, pointing instead to curiosity and initiative as the real drivers of capability.

In practice, AI should be treated less like a tool and more like a collaborator. “I use it as a sparring partner. I don’t let it do all my thinking, but I let it expand my thought horizon,” Shen says.

A pattern emerges. AI literacy is not about delegation, but about how effectively someone can think alongside the technology.

AI as a Performance Multiplier, Not a Replacement

Initially, the growing use of AI tools sparked fears of job displacement, but this perception is now changing. Many companies are positioning AI as an assistant that enhances employees’ productivity without replacing their roles. The emphasis is on using AI to amplify output and unlock new efficiencies.

At Onward, this philosophy is embedded in daily operations. Employees are expected to incorporate AI into their routines, treating it as an essential component of how work gets done.

Jerry Smith, CEO & Co-Founder of Onward, notes, “It’s table stakes. It’s expected.”

This expectation signals a shift in baseline performance standards. Employees who fail to integrate AI into their work risk falling behind.

Smith makes it clear that AI usage is no longer seen as a differentiator in hiring or performance. “It’s not anymore being like, ‘good job using AI.’ It’s no longer that exciting that you know how to use ChatGPT or Claude. What’s more significant is can you get outcomes that move the needle,” he says. Results, not tools, are what matter.

This expectation has reshaped how work is approached internally. Employees are encouraged to apply AI to real scenarios rather than hypothetical use cases. “What is the real client? What is the real problem you’re trying to solve?” Smith asks.

At Onward, AI adoption is structured into operations. “We have a weekly meeting that’s all about AI tools and adoption, where teams share how they’re using them. This creates a feedback loop where usage is not only encouraged but continuously refined,” Smith illustrates.

For the team at Onward, AI is never used as a replacement for employees. “It makes employees five to ten times more efficient and effective,” Smith says, positioning AI as a performance multiplier rather than a cost-cutting mechanism.

This mindset carries into hiring. “We ask candidates, ‘How did you use AI in this interview?’” Smith notes. However, depth matters. “I don’t want one click, throw it in ChatGPT, give me an answer,” he adds.

As AI becomes more dominant in workflows, superficial use is easy to identify. “You can tell when someone is reading a script… and when you go one level deeper, they can’t answer it,” Smith says. Genuine understanding matters more than polished output.

The Rising Importance of Judgment and Critical Thinking

As AI tools become more accessible, the ability to evaluate their outputs is emerging as a critical skill. AI can generate insights and recommendations at scale, but it still requires human judgment.

Over-reliance on AI carries risks, including shallow analysis and flawed decision-making. Employees need a clear understanding of context and consequences so that they do not accept outputs at face value, a habit that can lead to hard-to-detect errors.

Coleman Technologies Inc. highlights this point by focusing on strategic AI use. The company stresses that true AI literacy involves not just using tools, but understanding when and how to question them.

Darren Coleman, CEO of Coleman Technologies Inc., emphasizes that AI literacy means using tools strategically while maintaining human judgment to validate outputs and understand real-world consequences.

At the company, AI isn’t seen as a standalone capability, but as an amplifier. “It’s not really a skill… it’s an amplifier of what you already have,” Coleman explains, cautioning that it does not improve underlying thinking.

In fact, one could argue that the opposite effect can occur. “It doesn’t make you smarter. It just makes some things more visible,” Coleman says, pointing to how AI can expose gaps in reasoning rather than mask them.

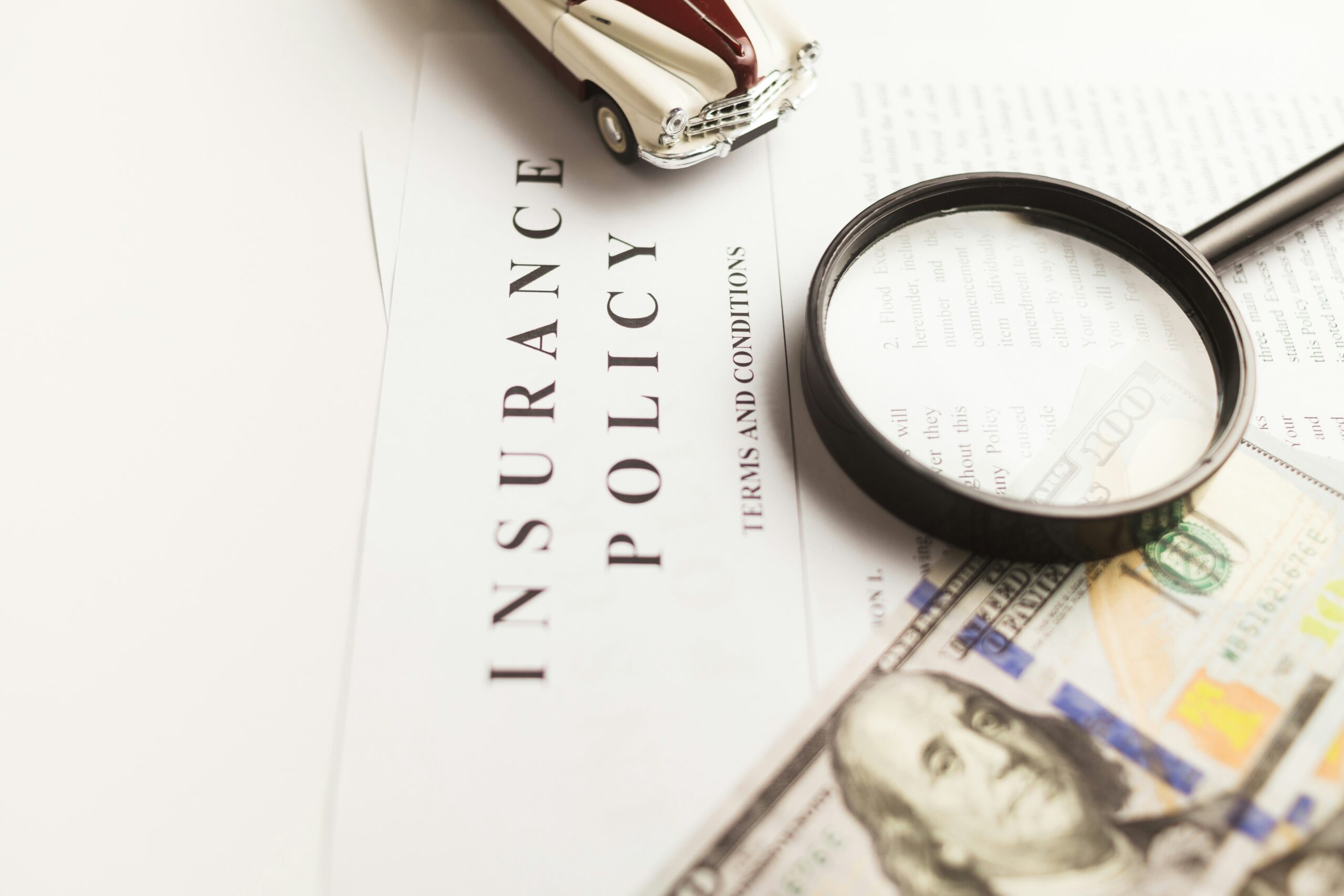

One of the most common issues he observes is uncontrolled adoption within organizations. “People are using AI because it’s fast, but they’re doing it without policy, without visibility, and without leadership knowing,” Coleman notes.

This lack of oversight can introduce serious risks, particularly with sensitive data. Coleman illustrates, “If one team member uploads a case study into a free AI tool… it’s now on a third-party server, outside your privacy policy.”

The root problem is not the technology itself, but how organizations approach it. “Companies don’t fail because they chose the wrong tool. They fail because they chose the tool before the outcome,” Coleman explains. This perspective also shapes how he evaluates talent. “Employees must challenge the AI, not blindly trust it.”

Ultimately, judgment must be prioritized over knowledge. “I don’t need someone who knows everything. I need someone who can find the answer and understand if it’s correct,” Coleman adds.

Hiring for Adaptability

In this evolving landscape, adaptability is becoming a defining trait. Companies are moving away from rigid, experience-based hiring models toward a more dynamic assessment of potential. The ability to “unlearn” outdated practices and embrace new methods is now highly valued.

This shift is also influencing interview processes. Employers increasingly expect candidates to use AI tools during preparation, but they also look for evidence of original thinking. Surface-level responses generated by AI are easy to identify. The ability to go deeper, challenge assumptions, and articulate independent insights can help candidates stand out.

Practical Takeaways for Job Seekers and Employers

For job seekers, simply using AI is not enough. They need to show that they go beyond basic outputs, applying critical thinking to refine the results. For employers, the challenge lies in setting clear expectations. Establishing AI policies and guardrails can help ensure responsible use while encouraging innovation. Hiring strategies should also prioritize cognitive flexibility and sound judgment over narrow technical expertise.

Final Thoughts

AI literacy has moved from a competitive advantage to a baseline expectation. Across industries, the ability to work effectively with AI is becoming a prerequisite for success. Yet the defining edge lies not in access to tools, but in how individuals think, adapt, and collaborate with them. Even as hiring models evolve, human judgment remains indispensable in the era of AI.